Pattern Essence

Reflection Agent is a pattern in which the agent, after drafting a response, runs one quick quality check: it looks for obvious issues (unclear wording, contradictions, overconfident claims) and fixes them once before the final answer.

Important: Reflection is a lightweight pre-send check. It is not full validation by rules or policies.

When to use it: when you need a short controlled self-check and one revision before sending.

Reflection does not replace the agent's core workflow.

It adds a short control stage:

- quickly verify whether the answer is clear, self-consistent, and not missing critical caveats

- find obvious risks

- fix only what is needed

- finish without creating a new infinite loop

Problem

Imagine an agent preparing a customer answer for a high-risk request.

The draft is generally correct but has small risks:

- overly certain wording without basis

- contradiction between paragraphs

- missing caveat

- unclear applicability boundary

Without a control pass, this draft goes directly to the user.

The most dangerous mistakes are often not blatant, but "almost invisible" in normal-looking text.

Consequences:

- trust in the answer drops

- false confidence appears

- risk of wrong decisions increases

That is the problem: even a good draft without review can go out with critical inaccuracies.

Solution

Reflection adds a reflection-policy before final delivery.

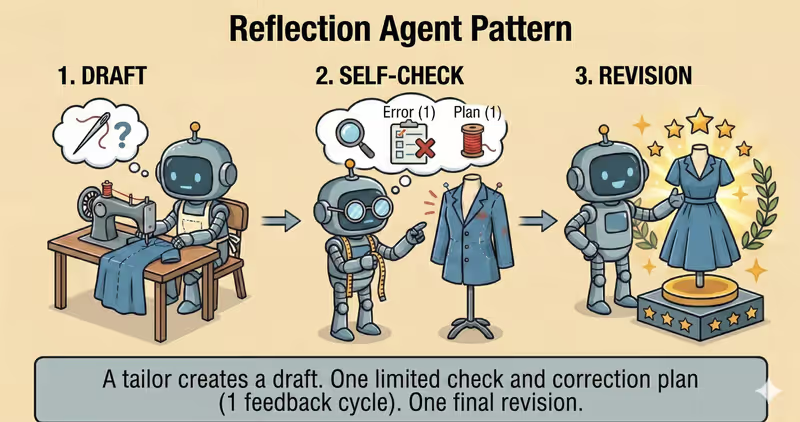

Analogy: this is like a tailor before handing over a suit. First they check the fit, then do one precise adjustment. It improves the result, but does not turn the process into endless fittings.

Core principle: one short quality check and one revision, without endless "make it even better" loops.

The agent may propose a change, but policy defines:

- whether a revision is needed

- what exactly is allowed to be changed

- when to stop or escalate
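The split between "the agent proposes" and "policy decides" can be sketched as a small pure function. This is a minimal illustration, not a fixed API: the `Issue` fields, the `FIXABLE_KINDS` allow-list, and the issue labels are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    OK = "ok"
    REVISE = "revise"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Issue:
    kind: str        # hypothetical label, e.g. "overconfident_claim"
    high_risk: bool  # set by the review step


# Hypothetical allow-list: issue kinds the agent may fix on its own.
FIXABLE_KINDS = {
    "unclear_wording",
    "contradiction",
    "overconfident_claim",
    "missing_caveat",
}


def decide(issues: list[Issue]) -> Decision:
    """Policy, not the model, chooses the next step."""
    # Anything high-risk or outside the allow-list goes to a human.
    if any(i.high_risk or i.kind not in FIXABLE_KINDS for i in issues):
        return Decision.ESCALATE
    # Remaining issues are fixable: allow exactly one revision.
    return Decision.REVISE if issues else Decision.OK
```

Keeping the decision in plain code rather than in the prompt means the stop and escalate rules stay the same no matter how the model words its review.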

Controlled process:

- Draft: generate first version

- Review: run one structured check

- Decision: `ok` / `revise` / `escalate`

- Revision: perform one `patch-only` edit by `fix_plan`

- Finalize: return final output without a new loop

This gives you:

- removal of obvious risks before sending

- noticeable quality gains with small overhead

- revision control within `fix_plan`

- protection from infinite self-edit

Works well if:

- `no_new_facts` is enforced

- changes are `patch-only`

- high-risk cases are escalated, not endlessly rewritten

The model may "want" to edit again and again, but reflection-policy is what ends the process within predictable bounds.
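One cheap way to approximate the `no_new_facts` rule is to flag numeric claims that appear in the revision but not in the draft or the source context. The sketch below is only a heuristic under that assumption: the function name `introduces_new_numbers` is hypothetical, and it catches numbers and percentages only, not new facts stated in words.

```python
import re

# Matches integers, decimals, and percentages such as "30", "99.99", "99.99%".
NUMBER = re.compile(r"\d+(?:\.\d+)?%?")


def introduces_new_numbers(draft: str, revised: str, context: str = "") -> set[str]:
    """Return numeric tokens present in `revised` but absent from draft and context."""
    known = set(NUMBER.findall(draft)) | set(NUMBER.findall(context))
    return set(NUMBER.findall(revised)) - known


draft = "SLA for all customers is 99.99%."
revised = "SLA depends on plan level. For enterprise, it is 99.99% according to support policy."

# Empty set: the revision reuses the number that was already in the draft.
new_numbers = introduces_new_numbers(draft, revised)
```

A non-empty result is a signal to reject the revision or escalate, not proof that a fact is wrong.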

How It Works

Key rule: reflection must be bounded.

That means:

- max 1 review pass

- max 1 revision pass

- no new facts added

- revision must be `patch-only` and only inside `fix_plan`

- escalate on high-risk issues
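The pass limits above can be enforced in code rather than left to the prompt. The following is a minimal sketch, assuming a hypothetical `PassBudget` helper that the orchestration code consults before each review or revision call.

```python
from dataclasses import dataclass


@dataclass
class PassBudget:
    """Counts review and revision passes and refuses to exceed the limits."""
    max_reviews: int = 1
    max_revisions: int = 1
    reviews_used: int = 0
    revisions_used: int = 0

    def take_review(self) -> None:
        if self.reviews_used >= self.max_reviews:
            raise RuntimeError("review budget exhausted")
        self.reviews_used += 1

    def take_revision(self) -> None:
        if self.revisions_used >= self.max_revisions:
            raise RuntimeError("revision budget exhausted")
        self.revisions_used += 1


budget = PassBudget()
budget.take_review()    # first review: allowed
budget.take_revision()  # first revision: allowed
# A second take_review() or take_revision() raises RuntimeError.
```

Raising an error instead of silently skipping makes an accidental second loop visible during testing.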

Full flow: Draft → Review → Revise → Finalize

Draft

The agent creates the initial response from available context.

Review

A separate prompt checks the draft against simple points: clarity, logic, internal consistency, and overconfident claims.
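A review step like this might be built from a fixed prompt and a strict parser for the reply. The sketch below makes several assumptions: the JSON field names (`ok`, `high_risk`, `issues`, `fix_plan`) mirror the attributes used elsewhere in this article but are not a defined schema, the model call itself is left out, and treating a malformed reply as high risk is one possible fail-closed choice.

```python
import json

RUBRIC = ["clarity", "logic", "internal consistency", "overconfident claims"]


def build_review_prompt(draft: str) -> str:
    """Build the single review prompt from the fixed rubric."""
    checks = "\n".join(f"- {item}" for item in RUBRIC)
    return (
        "Review the draft below exactly once. Check:\n"
        f"{checks}\n"
        'Reply with JSON only: {"ok": bool, "high_risk": bool, '
        '"issues": [string], "fix_plan": [string]}\n\n'
        f"Draft:\n{draft}"
    )


def parse_review(raw: str) -> dict:
    """Parse the reviewer's reply; anything malformed is treated as high risk."""
    fail_closed = {"ok": False, "high_risk": True,
                   "issues": ["unparseable review"], "fix_plan": []}
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return fail_closed
    if not isinstance(data, dict) or not isinstance(data.get("ok"), bool):
        return fail_closed
    issues = data.get("issues", [])
    fix_plan = data.get("fix_plan", [])
    if not isinstance(issues, list) or not isinstance(fix_plan, list):
        return fail_closed
    return {
        "ok": data["ok"],
        "high_risk": bool(data.get("high_risk", False)),
        "issues": issues,
        "fix_plan": fix_plan,
    }
```

Failing closed means a broken review can never be mistaken for an approval.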

Revision

If issues are found, the agent performs one revision from a structured fix_plan.
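One way to make a revision patch-only is to express the fix_plan as exact text replacements and apply them mechanically. This is a sketch under that assumption: the `Fix` structure and `apply_fix_plan` function are hypothetical, and a real fix_plan might instead be instructions for the model to follow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fix:
    target: str       # exact span in the draft that may change
    replacement: str  # text that replaces it
    reason: str       # why the review asked for the change


def apply_fix_plan(draft: str, fix_plan: list[Fix]) -> str:
    """Apply each fix exactly once; refuse ambiguous or missing targets."""
    revised = draft
    for fix in fix_plan:
        if revised.count(fix.target) != 1:
            raise ValueError(f"target must occur exactly once: {fix.target!r}")
        revised = revised.replace(fix.target, fix.replacement)
    return revised


draft = "SLA for all customers is 99.99%. Contact support for details."
plan = [Fix(target="for all customers",
            replacement="for the enterprise plan",
            reason="claim is too categorical")]
revised = apply_fix_plan(draft, plan)
# revised == "SLA for the enterprise plan is 99.99%. Contact support for details."
```

Because everything outside the listed targets is copied verbatim, the revision cannot drift beyond the plan.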

Finalize

The system returns the result, or stops the process if the risk is too high.

In Code, It Looks Like This

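```python
# `writer`, `reflector`, `supervisor`, and `escalate_to_human` are placeholders
# for your own components; they are not part of any specific library.
def answer_with_reflection(goal, context, writer, reflector, supervisor):
    """Draft -> Review -> Revise -> Finalize, each stage at most once."""
    draft = writer.generate(goal, context)

    # Single structured review pass against a fixed rubric.
    review = reflector.review_once(
        draft=draft,
        rubric=[
            "no_new_facts",
            "preserve_uncertainty",
            "consistency_check",
        ],
    )

    # High-risk findings are escalated, never auto-fixed.
    if review.high_risk:
        return escalate_to_human(review.reason)

    if review.ok:
        return draft

    # Single revision pass, limited to what fix_plan allows.
    revised = writer.revise_once(
        draft=draft,
        fix_plan=review.fix_plan,
        rules=["no_new_facts", "keep_scope"],
    )

    # Optional: verify the revision stayed within `fix_plan`.
    approved = supervisor.review_output_patch(
        original=draft,
        revised=revised,
        allowed_changes=review.fix_plan,
    )
    return approved
```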

Reflection should not run "until perfect." It is one review pass, one revision, and one check that the revision stayed inside fix_plan.
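The final check, that a revision stayed inside fix_plan, can be approximated with a sentence-level diff. The sketch below is one possible approach, not the only one: it assumes the plan is available as a list of exact target strings, uses a naive sentence splitter, and rejects any sentence that is added without replacing an allowed one. The function names are hypothetical.

```python
import difflib
import re


def split_sentences(text: str) -> list[str]:
    # Naive splitter: break after ., ! or ? when followed by whitespace.
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def stayed_within_fix_plan(original: str, revised: str,
                           allowed_targets: list[str]) -> bool:
    """True if every changed sentence of `original` contains an allowed target."""
    before = split_sentences(original)
    after = split_sentences(revised)
    matcher = difflib.SequenceMatcher(a=before, b=after, autojunk=False)
    for tag, i1, i2, _j1, _j2 in matcher.get_opcodes():
        if tag == "equal":
            continue
        if tag == "insert":
            return False  # a new sentence that replaces nothing in the original
        # "replace" or "delete": each touched sentence must be covered by the plan.
        for sentence in before[i1:i2]:
            if not any(target in sentence for target in allowed_targets):
                return False
    return True


original = ("Backups run nightly. SLA for all customers is 99.99%. "
            "Contact support for details.")
allowed = ["SLA for all customers is 99.99%"]

in_scope = ("Backups run nightly. SLA depends on plan level. "
            "For enterprise, it is 99.99% according to support policy. "
            "Contact support for details.")
out_of_scope = ("Backups run hourly. SLA depends on plan level. "
                "Contact support for details.")

ok_result = stayed_within_fix_plan(original, in_scope, allowed)       # True
bad_result = stayed_within_fix_plan(original, out_of_scope, allowed)  # False
```

The second revision fails because it also changed the backup sentence, which the plan never listed.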

How It Looks During Runtime

Goal: prepare an accurate answer with correct SLA wording

Draft:

"SLA for all customers is 99.99%."

Review:

- issue: claim is too categorical

- issue: plan level not specified

- fix: add condition and source

Revised:

"SLA depends on plan level. For enterprise, it is 99.99% according to support policy."


When It Fits - And When It Doesn't

Good Fit

| | Situation | Why Reflection Fits |
|---|---|---|
| ✅ | Response is sent externally and needs extra review | Reflection catches obvious issues before sending to the user. |
| ✅ | Need quality gains without a major latency penalty | One bounded pass often gives a noticeable quality improvement. |
| ✅ | You have a clear quality rubric and stop rules | Formalized criteria make self-review stable and predictable. |
| ✅ | Need a more reliable final response without a rewrite loop | Reflection adds a control step without triggering endless rewrites. |

Not a Fit

| | Situation | Why Reflection Does Not Fit |
|---|---|---|
| ❌ | Critically low-latency path | Even one extra pass may make the response too late. |
| ❌ | No clear quality rubric | Without criteria, reflection becomes chaotic and does not improve reliably. |
| ❌ | Need external fact validation via tools or humans | Reflection does not replace fact-checking with external sources or manual review. |

The common cause behind these cases: reflection adds one more generation pass, which increases response time and cost.
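The fit criteria can be turned into a simple gate that runs before the reflection step. This is a minimal sketch with hypothetical parameter names; the latency estimate would come from your own measurements.

```python
def should_reflect(remaining_ms: float, reflection_estimate_ms: float,
                   has_rubric: bool) -> bool:
    """Run reflection only if a rubric exists and the extra pass fits the budget."""
    return has_rubric and reflection_estimate_ms <= remaining_ms


# Enough budget and a rubric: reflect.
fits = should_reflect(remaining_ms=500, reflection_estimate_ms=300, has_rubric=True)
# Not enough budget: skip reflection and send the draft through other controls.
too_slow = should_reflect(remaining_ms=200, reflection_estimate_ms=300, has_rubric=True)
```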

How It Differs From Self-Critique

| | Reflection | Self-Critique |
|---|---|---|
| Goal | Catch obvious issues before sending | Check the answer by stricter rules and rewrite if needed |
| Depth | Fast quality check: is the answer clear, logical, and free of obvious mistakes | Usually stricter critique and revision |
| Result type | ok/issues/fix plan | detailed risks + required changes + diff orientation |
| Risk | Becoming a second loop without limits | Excessive rewriting and higher cost |

Reflection is a quick bounded control run. Self-Critique is a deeper pass with rewriting.

When To Use Reflection Among Other Patterns

Use Reflection when you need to quickly verify an answer before sending: it should be clear, logical, and free of obvious mistakes.

Quick test:

- if you need to "briefly review and fix before final" -> Reflection

- if you need to "deeply critique by checklist and rewrite" -> Self-Critique Agent

Comparison with other patterns and examples

Quick cheat sheet:

| If the task looks like this... | Use |
|---|---|
| Need a short check before final response | Reflection Agent |
| Need deep criteria-based critique and answer rewriting | Self-Critique Agent |
| Need to recover process after timeout, exception, or tool failure | Fallback-Recovery Agent |
| Need strict policy checks before risky action | Guarded-Policy Agent |

Examples:

Reflection: "Before final response, quickly check logic, completeness, and obvious mistakes".

Self-Critique: "Evaluate response by checklist (accuracy, completeness, risks), then rewrite".

Fallback-Recovery: "If the API does not respond: retry -> fallback source -> escalation".

Guarded-Policy: "Before sending data externally, check policy: whether this is allowed".


How To Combine With Other Patterns

- Reflection + RAG: Reflection checks that conclusions actually match retrieved sources.

- Reflection + Supervisor: high-risk conclusions are not auto-fixed and are sent for human approval.

- Reflection + ReAct: after a series of ReAct steps, the agent performs a final check before answering.

In Short

Reflection Agent:

- Performs one control self-check

- Revises response once

- Adds no new facts

- Reduces risk of obvious production mistakes

Pros and Cons

Pros

- checks response before sending

- fixes obvious mistakes

- improves clarity and structure

- helps keep required format

Cons

- adds one more step and latency

- spends more tokens

- without boundaries can overcomplicate response

FAQ

Q: Can reflection replace fact-checking and tests?

A: No. This is an additional quality layer. Fact-checking, validation, and policy controls are still required separately.

Q: Why limit number of passes?

A: Otherwise reflection becomes a second loop: latency, cost, and risk of new mistakes increase.

Q: What if reflection finds a high-risk issue?

A: Do not continue automatically. Stop the process or escalate to human review / Supervisor policy.

What Next

Reflection adds bounded quality control before final response.

What should you do when you need stricter critique and a revision process with structured change rules?