Pattern Essence

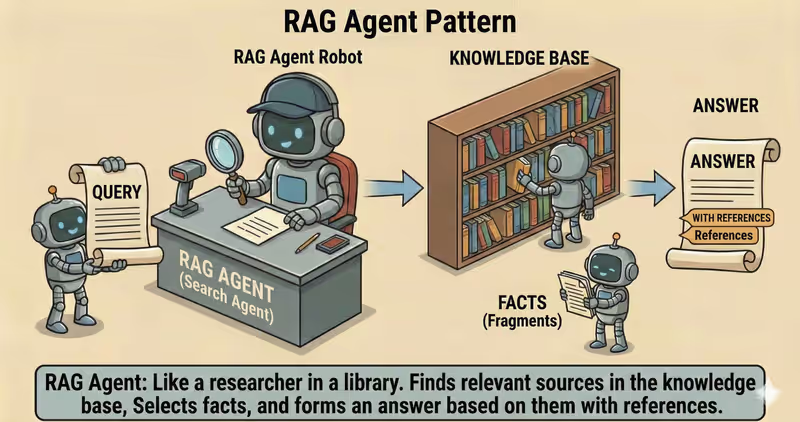

RAG Agent is a pattern where the agent first finds relevant sources, then produces the answer based on them, instead of relying only on the model's parametric memory.

When to use it: when the answer must rely on current documents and references, not only model memory.

Instead of answering "from the model's head", RAG adds a dedicated step:

- find facts in the knowledge base

- select the most relevant fragments

- provide an answer with citations

Problem

Imagine a user asks:

"What is the SLA for the enterprise plan?"

The agent answers without a retrieval step, using only model memory.

The answer may sound confident and still be poorly verified:

- outdated value from a previous policy version

- mixed facts from different documents

- no source to verify against

- "precise" wording without evidence

Without controlled retrieval, even a plausible answer may be ungrounded.

This is especially risky for support, compliance, internal policy, and technical documentation.

That is the core issue: without source grounding, the agent can produce a convincing but unverified answer that is hard to audit.

Solution

RAG adds a grounding policy that controls what is retrieved and what reaches the model before generation.

Analogy: this is like answering with an open book. First you find the right pages, then you formulate the answer. If sources are missing, it is better to clarify the request than to invent.

Key principle: first find and verify sources, then generate the answer.

The agent may propose text, but the grounding policy determines:

- which sources are valid

- what can be included in the answer

- when a fallback scenario is required instead of "filling gaps"
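A grounding policy of this kind can be sketched as a small object that answers the three questions above. This is a minimal illustration, not a reference implementation: the `Source` and `GroundingPolicy` names, their fields, and the threshold values are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Source:
    # Hypothetical shape of one retrieved source.
    doc_id: str
    tenant_id: str
    updated_at: date
    score: float  # relevance score, assumed to be normalized to [0, 1]


@dataclass(frozen=True)
class GroundingPolicy:
    # All defaults below are illustrative assumptions.
    tenant_id: str
    min_score: float = 0.5
    oldest_allowed: date = date(2024, 1, 1)
    min_sources: int = 1

    def is_valid(self, source: Source) -> bool:
        """Which sources are valid: right tenant, relevant enough, fresh enough."""
        return (
            source.tenant_id == self.tenant_id
            and source.score >= self.min_score
            and source.updated_at >= self.oldest_allowed
        )

    def allowed_sources(self, sources: list[Source]) -> list[Source]:
        """What can be included in the answer context."""
        return [s for s in sources if self.is_valid(s)]

    def needs_fallback(self, sources: list[Source]) -> bool:
        """When a fallback is required instead of filling gaps."""
        return len(self.allowed_sources(sources)) < self.min_sources
```

Keeping these rules in one place, outside the prompt, means they can be tested and audited independently of the model.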

Controlled process:

- Retrieve: find relevant fragments

- Rank/filter: remove noise and duplicates

- Ground to sources: build allowed context

- Generate: answer only within that context

- Cite: attach links/metadata to claims
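The rank/filter step in the process above can be sketched as follows. This assumes each chunk is a dict with `text` and `score` keys; the function name `rank_and_filter`, the score threshold, and the `top_n` limit are hypothetical choices for illustration. Duplicates are detected by normalized text only, which is a simplification.

```python
def rank_and_filter(chunks, min_score=0.3, top_n=3):
    """Sort chunks by score, drop weak matches and exact-text duplicates."""
    seen = set()
    kept = []
    for chunk in sorted(chunks, key=lambda c: c["score"], reverse=True):
        # Normalize case and whitespace so trivially different copies collapse.
        key = " ".join(chunk["text"].lower().split())
        if chunk["score"] < min_score or key in seen:
            continue
        seen.add(key)
        kept.append(chunk)
    return kept[:top_n]
```

Because the input is sorted first, the copy that survives deduplication is always the highest-scoring one.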

This gives you:

- lower hallucination risk for factual queries

- answer grounding in documents

- verifiability and auditability

- fresher answers when docs change

Works well if:

- retrieval runs on a high-quality index with metadata

- ranking consistently filters noise

- model does not answer outside grounded context

- when sources are absent, a safe fallback scenario is triggered

The model may "want" to answer from memory, but the RAG layer decides whether there is enough evidence.

How It Works

RAG does not replace the agent. It adds a knowledge layer before answer generation.

Key idea: if relevant context is missing, the system must not "invent an answer".

Full flow description: Retrieve → Ground → Generate → Cite

Retrieve

The system searches the knowledge base for candidate fragments that match the user query.
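As a rough sketch of the retrieve step, the example below scores chunks by keyword overlap with the query and applies metadata filters such as `tenant_id`. Production systems usually rely on a vector or BM25 index instead; the overlap scoring, the in-memory `knowledge_base` list, and the chunk shape (`text` plus `metadata`) are assumptions made to keep the example self-contained.

```python
import re


def tokenize(text):
    """Lowercase word set; a stand-in for real query analysis."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(goal, knowledge_base, top_k=8, filters=None):
    """Return up to top_k chunks that pass the filters, best match first."""
    filters = filters or {}
    query_terms = tokenize(goal)
    scored = []
    for chunk in knowledge_base:
        # Metadata filters are hard constraints, applied before scoring.
        if any(chunk["metadata"].get(k) != v for k, v in filters.items()):
            continue
        overlap = len(query_terms & tokenize(chunk["text"]))
        if overlap:
            scored.append((overlap / len(query_terms), chunk))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [dict(chunk, score=score) for score, chunk in scored[:top_k]]
```

Applying the tenant filter before scoring means a highly relevant chunk from another tenant can never reach the context, regardless of its score.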

Ground to sources

Selected fragments are passed as the single allowed context for answer generation.
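One way to build that single allowed context is to pack ranked fragments into a token budget and give each a short reference tag. The sketch below is illustrative: `pack_context`, the `S1`, `S2` tag scheme, and the word-count token estimate are assumptions, and a real system would use the model's own tokenizer.

```python
def estimate_tokens(text):
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def pack_context(chunks, max_tokens=2500):
    """Keep ranked chunks in order until the token budget is exhausted."""
    packed, used = [], 0
    for chunk in chunks:
        cost = estimate_tokens(chunk["text"])
        if used + cost > max_tokens:
            break  # stop at the first chunk that does not fit
        # Tag each kept chunk so the answer can cite it later.
        packed.append({"ref": f"S{len(packed) + 1}", **chunk})
        used += cost
    return packed
```

An empty result from this step is the signal to trigger the fallback rather than call the model.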

Generate

The agent generates an answer only from the provided context. Any information outside it is considered disallowed.
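In practice, "only from the provided context" is usually enforced through the prompt plus a check on the output. The sketch below shows the prompt half, assuming context items carry the `ref` and `text` keys; the function name, the wording of the instructions, and the `INSUFFICIENT_CONTEXT` sentinel are hypothetical. Prompt instructions reduce out-of-context answers but do not guarantee their absence.

```python
def build_grounded_prompt(goal, context):
    """Assemble a prompt that restricts the model to the tagged sources."""
    if not context:
        # Generation without sources is exactly what the pattern forbids.
        raise ValueError("no grounded context: use a fallback instead of generating")
    sources = "\n".join(f"[{c['ref']}] {c['text']}" for c in context)
    return (
        "Answer the question using only the sources below.\n"
        "Cite the source tag, for example [S1], after each claim.\n"
        "If the sources do not contain the answer, reply exactly: "
        "INSUFFICIENT_CONTEXT\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {goal}\n"
    )
```

The sentinel reply gives the calling code an unambiguous signal to switch to the fallback path.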

Cite

Sources are attached to the final output: links, document name, version, or timestamp.
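A minimal sketch of the cite step, assuming the answer text contains tags such as `[S1]` and each context item carries a `ref` and a `metadata` dict (`doc_id`, `section`, `updated_at`). The tag format, the returned structure, and the `grounded` flag are assumptions for illustration.

```python
import re


def attach_citations(answer, context):
    """Resolve [S<n>] tags in the answer to source metadata."""
    by_ref = {c["ref"]: c["metadata"] for c in context}
    # Unique tags in order of first appearance.
    used = list(dict.fromkeys(re.findall(r"\[(S\d+)\]", answer)))
    unknown = [ref for ref in used if ref not in by_ref]
    if unknown:
        # A tag with no matching source means the model cited something
        # that was never provided.
        raise ValueError(f"answer cites unknown sources: {unknown}")
    citations = [{"ref": ref, **by_ref[ref]} for ref in used]
    return {"answer": answer, "citations": citations, "grounded": bool(citations)}
```

An answer with no citations comes back with `grounded` set to false, so the caller can treat it like a missing-context case.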

In Code It Looks Like This

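    # Hypothetical wrapper; the helper functions are assumed to exist elsewhere.
    def answer_with_rag(goal, tenant_id):
        # Retrieve candidate fragments, restricted to the caller's tenant
        chunks = retrieve(goal, top_k=8, filters={"tenant_id": tenant_id})
        # Rerank and fit the best fragments into the context budget
        context = rerank_and_pack(goal, chunks, max_tokens=2500)
        if not context:
            # no relevant context -> do not generate an answer
            return ask_clarifying_or_fallback(goal)
        # generate only from retrieved sources
        answer = generate_grounded_answer(goal, context)
        answer = attach_citations(answer, context)
        return answer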

What It Looks Like During Execution

Goal: What is the SLA for the enterprise plan?

Retrieve:

- found 6 fragments in support policies

- after rerank, kept 2 relevant ones

- if relevant fragments = 0 -> clarifying question / fallback instead of an invented answer

Ground:

- built context from two excerpts

- added metadata: doc_id, section, updated_at

Generate:

- answer generated only from those sources

Cite:

- added link to "Support Policy v3.2"


When It Fits - and When It Does Not

Good Fit

| | Situation | Why RAG Fits |
|---|---|---|
| ✅ | Factual accuracy and source links are important | RAG ties the answer to specific documents and makes verification easier. |
| ✅ | Knowledge changes frequently | Retrieval pulls fresh data without model retraining. |
| ✅ | Answer must be grounded in internal materials | RAG lets you use corporate documents as the answer foundation. |
| ✅ | You need to reduce hallucinations | Grounded context lowers the share of answers without factual support. |
| ✅ | Result must be auditable | You can log retrieved sources and explain what the answer is based on. |

Not a Good Fit

| | Situation | Why RAG Does Not Fit |
|---|---|---|
| ❌ | Task does not depend on external knowledge | Retrieval adds overhead without noticeable result improvement. |
| ❌ | No quality knowledge base and metadata | A weak index and low-quality docs produce irrelevant retrieval. |
| ❌ | Only short generation is needed without fact-checking | In that case RAG complicates the system and increases latency. |

In these cases RAG is not worth the cost, because it adds extra indexing, retrieval, and ranking steps.

How It Differs from ReAct

| | ReAct | RAG |
|---|---|---|
| Main role | Stepwise decision making | Supplying relevant knowledge into context |
| Key question | What should we do next? | Which sources should the answer rely on? |
| Focus | Actions and tools | Facts and grounded generation |
| Risk without guardrails | Excess tool calls or loops | Hallucinations when retrieval is weak |

ReAct controls the agent action loop.

RAG controls the quality of knowledge used to produce the answer.

When to Use RAG (vs Other Patterns)

Use RAG when the answer must rely on external documents or a knowledge base for the current request.

Quick test:

- if you need to "find relevant sources and answer based on them" -> RAG

- if you need to "remember user context across steps or sessions" -> Memory-Augmented Agent

Comparison with other patterns and examples

Quick cheatsheet:

| If the task looks like this... | Use |
|---|---|
| Need to find knowledge in external sources and generate an answer from it | RAG Agent |
| Need to store and use user context across steps or sessions | Memory-Augmented Agent |

Examples:

RAG: "Answer customer questions only from the internal policy base and show sources."

Memory-Augmented: "Remember that the customer already chose the Pro plan and use that in follow-up responses."


How to Combine with Other Patterns

- RAG + ReAct: first the agent retrieves facts from sources, then executes steps on verified context.

- RAG + Supervisor: if valid sources are missing, the answer is blocked or routed for approval.

- RAG + Multi-Agent Collaboration: all agents share the same knowledge context and work consistently.

In Short

RAG Agent:

- Retrieves relevant knowledge fragments

- Builds the answer based on them

- Adds source citations

- Reduces hallucination risk

Pros and Cons

Pros

- answers based on your documents

- fewer model fabrications

- can show sources in the answer

- knowledge updates without retraining the model

Cons

- quality depends on index and chunking

- knowledge base requires maintenance

- without filters it can pull irrelevant fragments

FAQ

Q: Does RAG guarantee a 100% correct answer?

A: No. RAG lowers error risk, but answer quality still depends on how well indexing, retrieval, and ranking work.

Q: What if no relevant sources are found?

A: You need a safe fallback scenario: clarifying question, reasoned refusal, or human escalation.
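The choice between those three fallbacks can be made explicit in code. The routing rules below are purely illustrative assumptions (the `high_stakes` flag, the candidate count, and the four-word threshold are not from any standard); the point is only that the decision is deterministic and lives outside the model.

```python
from enum import Enum


class Fallback(Enum):
    CLARIFY = "clarifying_question"
    REFUSE = "reasoned_refusal"
    ESCALATE = "human_escalation"


def choose_fallback(goal, candidates_found, high_stakes=False):
    """Pick a fallback when no fragment survived filtering."""
    if high_stakes:
        # Hypothetical rule: compliance-style requests go to a human.
        return Fallback.ESCALATE
    if candidates_found > 0 or len(goal.split()) < 4:
        # Something loosely related exists, or the query is very short:
        # a more specific question may succeed.
        return Fallback.CLARIFY
    # Nothing related in the knowledge base: say so instead of guessing.
    return Fallback.REFUSE
```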

Q: Does RAG replace fine-tuning?

A: No. RAG handles access to fresh knowledge. Fine-tuning changes model style or behavior. In production they are often combined.

What Next

RAG gives the agent fresh external knowledge for the current request.

But how do you keep useful interaction context across user sessions?