Pattern essence

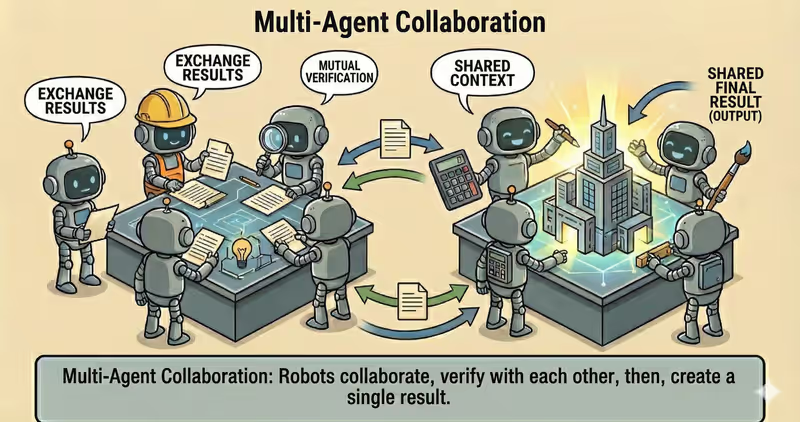

Multi-Agent Collaboration is a pattern where multiple agents work toward one goal, exchange results, review each other, and produce one shared final output.

When to use it: when a task needs multiple roles or domains of expertise plus mutual validation.

Instead of a "single agent does everything" model, the system:

- distributes roles across agents

- organizes shared context

- runs multiple rounds of interaction

- collects an aligned result

Problem

Imagine you need to prepare an investment report.

At the same time, you must:

- evaluate the market and competitors

- calculate the financial model

- review legal risks

- combine everything into one coherent conclusion

When one agent does all of this, it constantly switches roles and loses focus.

A single agent usually gives depth in one part or keeps the big picture, but rarely does both well.

That often leads to:

- important details missed

- mixed roles: analyst, legal reviewer, and editor in one response

- contradictions between document sections

- a final text that looks coherent but is weakly validated

That is the problem: for a complex multi-domain task, one agent is usually not enough.

Solution

Multi-Agent Collaboration distributes work across specialized agents and converges the result in rounds.

Analogy: this is like a specialist council. Each person owns a specific part, then the team cross-checks conclusions. The final result appears after alignment, not from one opinion.

Key principle: what matters is not the number of agents but clear roles, exchange of intermediate results, and explicit alignment rules.

Each agent produces a partial result, while the collaboration layer manages the process:

- Assign Roles: distribute responsibility by domain

- Work: get results from each agent

- Exchange/Review: mutually review and refine

- Resolve: remove conflicts between conclusions

- Synthesize: build the final shared output

This gives:

- more depth in each domain

- mutual cross-checking between agents

- fewer missed aspects

- aligned final conclusion

Works well if:

- roles and boundaries are explicit

- shared state and message format are structured

- the number of rounds is capped (max_rounds)

- resolve rules and ready criteria are defined
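The round cap and ready criteria can be written down as an explicit policy object. This is a minimal sketch, not a definitive implementation: `CollaborationPolicy`, `max_open_conflicts`, and `should_stop` are hypothetical names introduced for illustration; only `max_rounds` comes from the text above.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class CollaborationPolicy:
    """Hypothetical stop rules for the collaboration loop."""
    max_rounds: int = 3          # hard cap on alignment rounds
    max_open_conflicts: int = 0  # ready criterion: conflicts tolerated at finish

    def should_stop(self, round_no: int, open_conflicts: int) -> Tuple[bool, str]:
        """Return (stop?, reason) after a round; rounds are numbered from 1."""
        if open_conflicts <= self.max_open_conflicts:
            return True, "ready"
        if round_no >= self.max_rounds:
            return True, "max_rounds reached"
        return False, "continue"


policy = CollaborationPolicy(max_rounds=3)
print(policy.should_stop(round_no=1, open_conflicts=2))  # (False, 'continue')
print(policy.should_stop(round_no=2, open_conflicts=0))  # (True, 'ready')
print(policy.should_stop(round_no=3, open_conflicts=1))  # (True, 'max_rounds reached')
```

Keeping the stop decision outside the agents means the loop ends on the policy's terms, even if an agent would prefer another refinement pass.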

The model may "want" to keep refining forever; the collaboration policy is what stops the process within defined bounds.

How it works

Agents are not isolated from each other.

They interact through shared state: a blackboard, shared memory, or a structured message queue.
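A blackboard with a structured message format could look roughly like this. It is a sketch under assumptions: the `Message` fields, the `kind` values, and the `Blackboard` methods are hypothetical, and the message bodies are made-up sample text.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Message:
    """One structured entry on the board (hypothetical format)."""
    sender: str  # role that wrote the entry
    kind: str    # "contribution", "comment", or "conflict"
    topic: str   # section of the shared draft the entry refers to
    body: str


@dataclass
class Blackboard:
    goal: str
    messages: List[Message] = field(default_factory=list)

    def post(self, message: Message) -> None:
        self.messages.append(message)

    def latest_by_topic(self) -> Dict[str, str]:
        """Latest contribution per topic; later posts overwrite earlier ones."""
        draft: Dict[str, str] = {}
        for m in self.messages:
            if m.kind == "contribution":
                draft[m.topic] = m.body
        return draft

    def visible_to(self, role: str) -> List[Message]:
        """Everything written by other roles, i.e. what `role` should review."""
        return [m for m in self.messages if m.sender != role]


board = Blackboard(goal="due-diligence report")
board.post(Message("research", "contribution", "market", "optimistic growth forecast"))
board.post(Message("risk", "comment", "market", "check regulatory constraints"))
board.post(Message("research", "contribution", "market", "growth forecast adjusted for regulation"))

print(board.latest_by_topic())            # {'market': 'growth forecast adjusted for regulation'}
print(len(board.visible_to("research")))  # 1
```

Because every entry carries a sender and a kind, the full history stays on the board while the current draft is derived from it.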

Full flow description: Assign Roles → Work → Exchange → Synthesize

Assign Roles

The system defines ownership: research, analysis, validation, synthesis.
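One way to make ownership explicit is a map from role to the draft sections it may write. The sketch below is illustrative only: the `Role` enum mirrors the four roles named above, while the `OWNERSHIP` map, the section names, and `write_section` are assumptions.

```python
from enum import Enum


class Role(Enum):
    RESEARCH = "research"
    ANALYSIS = "analysis"
    VALIDATION = "validation"
    SYNTHESIS = "synthesis"


# Hypothetical ownership map: which draft sections each role may write.
OWNERSHIP = {
    Role.RESEARCH: {"market", "competitors"},
    Role.ANALYSIS: {"financial_model"},
    Role.VALIDATION: {"legal_risks"},
    Role.SYNTHESIS: {"final_report"},
}


def write_section(draft: dict, role: Role, section: str, text: str) -> None:
    """Write to the shared draft only if the role owns the section."""
    if section not in OWNERSHIP[role]:
        raise PermissionError(f"{role.value} does not own section '{section}'")
    draft[section] = text


draft = {}
write_section(draft, Role.RESEARCH, "market", "market overview")
try:
    write_section(draft, Role.RESEARCH, "financial_model", "numbers")
except PermissionError as err:
    print(err)  # research does not own section 'financial_model'
```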

Work

Each agent adds its contribution to shared state.

Exchange

Agents read outputs from others, add comments, clarify contradictions, and improve shared state.
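The exchange step can be sketched as a cross-review pass in which every role comments on every other role's contribution. The `Reviewer` signature, `exchange_round`, and the stub reviewers are hypothetical; in a real system each reviewer would be an LLM call with a review prompt.

```python
from typing import Callable, Dict, List

# Hypothetical reviewer signature: (own_role, other_role, other_text) -> comment or "".
Reviewer = Callable[[str, str, str], str]


def exchange_round(draft: Dict[str, str], reviewers: Dict[str, Reviewer]) -> List[dict]:
    """Let every role review every other role's contribution once."""
    comments = []
    for reviewer_role, review in reviewers.items():
        for author_role, text in draft.items():
            if author_role == reviewer_role:
                continue  # agents do not review their own output
            remark = review(reviewer_role, author_role, text)
            if remark:
                comments.append(
                    {"from": reviewer_role, "to": author_role, "comment": remark}
                )
    return comments


def risk_reviewer(own_role: str, other_role: str, text: str) -> str:
    # Stub rule standing in for an LLM review prompt.
    return "forecast ignores regulatory constraints" if "optimistic" in text else ""


def silent_reviewer(own_role: str, other_role: str, text: str) -> str:
    return ""


draft = {"finance": "optimistic growth forecast", "risk": "regulatory constraints apply"}
comments = exchange_round(draft, {"risk": risk_reviewer, "finance": silent_reviewer})
print(comments)
# [{'from': 'risk', 'to': 'finance', 'comment': 'forecast ignores regulatory constraints'}]
```

The returned comments are what gets written back to shared state, so the next round can address them.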

Synthesize

After several rounds, the system assembles one aligned final output.

In code it looks like this

    def find_conflicts(draft):
        # Simplified example: if agent conclusions differ, treat it as a conflict.
        summaries = {str(value).strip().lower() for value in draft.values()}
        return [] if len(summaries) <= 1 else ["conclusion alignment required"]


    def collaborate(goal, agents, max_rounds=3):
        # agents maps a role to an agent object with a work(board) method, e.g.
        # {"research": research_agent, "finance": finance_agent, "risk": risk_agent}
        board = {"goal": goal, "draft": {}, "notes": []}

        for round_no in range(1, max_rounds + 1):
            # 1) Each agent adds its part to the shared board
            for role, agent in agents.items():
                board["draft"][role] = agent.work(board)

            # 2) Detect where conclusions contradict each other
            conflicts = find_conflicts(board["draft"])

            # 3) If there are no conflicts - finish
            if not conflicts:
                break

            # 4) If conflicts exist - store them and run the next round
            board["notes"].append(conflicts)

        # build_final_report is assumed to be defined elsewhere
        return build_final_report(board)

In short: agents do not work "independently" - they use a shared board where all contributions and conflicts are visible.

How it looks during execution

Goal: prepare a final report for a company due-diligence review

Round 1:

- Research Agent: added market overview

- Finance Agent: added financial calculations

- Risk Agent: added legal risks

- System found a conflict: "optimistic growth forecast" vs "regulatory constraints"

- Between rounds: conflict stored in board["notes"] and sent back for refinement

Round 2:

- Finance Agent recalculated the model with risk constraints

- Research Agent clarified competitor data

- Risk Agent validated updated assumptions

- System found a new conflict: "fast market entry" vs "need for additional regulatory compliance"

- Between rounds: disputed points sent for another refinement pass

Round 3:

- Agents aligned the remaining contradictions

- No conflicts

- System assembles the final report
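A trace like this can be reproduced in miniature with scripted stub agents. The sketch below is an illustration, not the real agents: `StubAgent`, `run`, and the scripted conclusions are invented for the example, and conflict detection uses the same simplification as the code earlier (differing conclusions count as a conflict).

```python
class StubAgent:
    """Stand-in for an LLM agent: replays a scripted conclusion per round."""

    def __init__(self, script):
        self.script = script
        self.calls = 0

    def work(self, board):
        # Repeat the last scripted answer once the script is exhausted.
        answer = self.script[min(self.calls, len(self.script) - 1)]
        self.calls += 1
        return answer


def find_conflicts(draft):
    # Differing conclusions count as a conflict (simplified rule).
    conclusions = {str(v).strip().lower() for v in draft.values()}
    return [] if len(conclusions) <= 1 else sorted(conclusions)


def run(goal, agents, max_rounds=3):
    board = {"goal": goal, "draft": {}, "notes": []}
    rounds_used = 0
    for round_no in range(1, max_rounds + 1):
        rounds_used = round_no
        for role, agent in agents.items():
            board["draft"][role] = agent.work(board)
        conflicts = find_conflicts(board["draft"])
        if not conflicts:
            break
        board["notes"].append({"round": round_no, "conflicts": conflicts})
    return board, rounds_used


agents = {
    "research": StubAgent(["invest", "invest with conditions"]),
    "finance": StubAgent(["invest", "invest", "invest with conditions"]),
    "risk": StubAgent(["hold", "invest with conditions"]),
}
board, rounds_used = run("due-diligence report", agents)
print(rounds_used)                # 3
print(len(board["notes"]))        # 2
print(board["draft"]["finance"])  # invest with conditions
```

Rounds 1 and 2 each leave one conflict note on the board; round 3 ends with all three roles on the same conclusion, so the loop stops without hitting a fourth round.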


When it fits - and when it does not

Fits

| | Situation | Why this pattern fits |
|---|---|---|
| ✅ | Multi-domain task requiring different expertise | Each agent contributes specialization, and results are combined into one decision. |
| ✅ | Quality matters more than minimum latency | Additional alignment rounds improve quality, even though response time increases. |
| ✅ | Mutual validation of results is required | Agents can find each other's gaps through cross-review. |
| ✅ | One agent often misses aspects | Collaboration reduces blind spots through different roles and perspectives. |

Does not fit

| | Situation | Why this pattern does not fit |
|---|---|---|
| ❌ | Task is simple and repetitive | Coordination overhead is larger than practical benefit. |
| ❌ | Critically low response-time requirement | Multiple exchange rounds increase latency. |
| ❌ | No infrastructure for shared state and synchronization | Without shared context and coordination, agent outputs are hard to align. |

In all of these cases, the extra communication rounds and coordination overhead that collaboration adds outweigh its benefit.

How it differs from Orchestrator

| | Orchestrator | Multi-Agent Collaboration |
|---|---|---|
| Structure | Central coordinator | Shared work by multiple agents in rounds |
| Interaction type | Mainly subtask delegation | Intermediate exchange and mutual review |
| Optimization target | Execution speed and flow control | Decision quality and multi-domain alignment |
| Risk | Wrong dispatch | Conflicts between agent responses |

Orchestrator answers: "how to distribute work".

Multi-Agent Collaboration answers: "how agents align on one shared decision together".

When to use Multi-Agent Collaboration among other patterns

Use Multi-Agent Collaboration when multiple agents must produce one shared output and their conclusions can conflict.

Quick test:

- if you need to "align different views into one final conclusion" -> Multi-Agent Collaboration

- if it is enough to "pass tasks through steps and collect output" -> Orchestrator Agent

Comparison with other patterns and examples

Quick cheat sheet:

| If the task looks like this... | Use |
|---|---|
| Need to choose one best executor | Routing Agent |
| There is a sequence of steps and order matters | Orchestrator Agent |
| A policy check is required before the result | Supervisor Agent |
| Multiple agents must reach one conclusion | Multi-Agent Collaboration |

Examples:

Routing: "Customer asks for a refund - send to Billing, not Sales".

Orchestrator: "Prepare a release: first changelog, then QA, then deploy".

Supervisor: "Before sending an email, check policies, compliance, and prohibited promises".

Multi-Agent Collaboration: "Marketing, Legal, and Product must align on one final campaign text".


How to combine with other patterns

- Collaboration + Supervisor: safety rules are checked for each agent and each collaboration round.

- Collaboration + Orchestrator: Orchestrator synchronizes ordering and dependencies between agent groups.

- Collaboration + RAG: all agents work from one validated knowledge base to reduce contradictions.
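As an illustration of the Collaboration + Supervisor combination, each agent can be wrapped so that its contribution is checked before it reaches the shared board. Everything here is hypothetical: the `PROHIBITED` phrases stand in for a real policy engine, and `supervisor_check` and `supervised` are names made up for the sketch.

```python
from typing import Callable, List

# Hypothetical banned phrases standing in for a real policy engine.
PROHIBITED = ("guaranteed return", "risk-free")


def supervisor_check(text: str) -> List[str]:
    """Return the policy violations found in one contribution."""
    lowered = text.lower()
    return [phrase for phrase in PROHIBITED if phrase in lowered]


def supervised(agent: Callable[[dict], str]) -> Callable[[dict], str]:
    """Wrap an agent so every contribution is checked before it reaches the board."""
    def wrapper(board: dict) -> str:
        text = agent(board)
        violations = supervisor_check(text)
        if violations:
            # Record the violation and keep the offending text off the board.
            board.setdefault("violations", []).append(violations)
            return "[withheld: policy violation]"
        return text
    return wrapper


finance_agent = supervised(lambda board: "Guaranteed return of 20% per year")
board = {"draft": {}}
board["draft"]["finance"] = finance_agent(board)
print(board["draft"]["finance"])  # [withheld: policy violation]
print(board["violations"])        # [['guaranteed return']]
```

Because the wrapper runs on every call, the check applies to each agent in each round without changing the collaboration loop itself.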

In short

Multi-Agent Collaboration:

- Distributes roles across agents

- Organizes exchange of intermediate results

- Runs several alignment rounds

- Produces one shared final output

Pros and Cons

Pros

- distributes work across specialized agents

- can speed up complex tasks when agents work in parallel, although alignment rounds add latency

- makes the process easier to scale

- lets the result be validated at multiple stages

Cons

- coordination between agents is harder

- higher token and infrastructure cost

- harder to find the root cause of errors

FAQ

Q: Do agents have to communicate directly?

A: No. Most commonly they interact through shared state or a message bus.

Q: How to avoid "noise" between agents?

A: Define clear roles, message format, round limit (max_rounds), and completion criteria.

Q: What if agents disagree?

A: Add conflict resolution: voting, lead-agent arbitration, Supervisor policy-check, or human approval.
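A resolution chain of this kind might be sketched as follows: strict majority vote first, then lead-agent arbitration, then escalation to a human. The function name `resolve_conflict`, the order of the rules, and the sample positions are assumptions made for the example.

```python
from collections import Counter
from typing import Dict, Optional


def resolve_conflict(votes: Dict[str, str], lead: Optional[str] = None) -> str:
    """Pick one position: strict majority first, then the lead agent's vote.

    Returns "escalate to human" when neither rule decides.
    """
    if not votes:
        raise ValueError("at least one vote is required")
    counts = Counter(votes.values())
    position, top = counts.most_common(1)[0]
    if top > len(votes) / 2:
        return position             # strict majority wins
    if lead is not None and lead in votes:
        return votes[lead]          # lead-agent arbitration
    return "escalate to human"      # human approval as the last resort


print(resolve_conflict({"research": "fast entry", "finance": "fast entry", "risk": "delay"}))
# fast entry
print(resolve_conflict({"finance": "fast entry", "risk": "delay"}, lead="risk"))
# delay
print(resolve_conflict({"finance": "fast entry", "risk": "delay"}))
# escalate to human
```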

What next

Multi-Agent Collaboration helps align work between multiple agents.

But where should all agents get one consistent and validated knowledge source during execution?